I used to post many exploits, CVE records and notes about various vulnerabilities. I realized my notes could not keep up with the pace at which the flaws themselves appear, so I moved my vulnerability posts to Twitter. Some friends asked me, "Why did you do that?", so here I try to give a simple explanation by playing with some cross-checked statistics below:

Common Vulnerabilities and Exposures (CVE) and National Vulnerability Database (NVD)

These are the vulnerability record statistics dumped from the National Vulnerability Database (NVD). For those who may not know what NVD is: NVD is the U.S. government repository of standards-based vulnerability management data, and one of the things it contains is the Common Vulnerabilities and Exposures records, known as CVE records. So it holds good records of application CVEs from the very beginning.

I queried the CVE records across the full Published Date Range and the full Last Modified Date Range, which should effectively dump all registered CVE data since the first record. Below is the result:

So the data above shows that recently we have been dealing with roughly 4,000 flaws per year across major applications, or on average about 11 exposures per day. I can't blog about all of them, and I can't ignore them either, so I tweet them all.
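The per-day figure is simple division. A minimal sketch, assuming a 365-day year and the rounded yearly total of 4,000 quoted above (`average_per_day` is just an illustrative helper name):

```python
def average_per_day(flaws_per_year: int, days_per_year: int = 365) -> float:
    """Average number of newly disclosed flaws per day."""
    return flaws_per_year / days_per_year


# Roughly 4,000 flaws a year works out to about 11 per day.
per_day = average_per_day(4000)
print(f"{per_day:.2f} flaws per day")  # prints "10.96 flaws per day"
```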

Why do I have to mention these flaws at all? That question is answered further below.

The graph above shows that the number of registered vulnerability records has been declining since the beginning of 2010. As I see it, the reason is that software vulnerability and advisory management finally began to take effect around the beginning of 2010: well-managed advisories improve a product's bug handling, which reduces the application's flaws.

P.S.: You can verify the data yourself here.

Source: National Vulnerability Database
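If you want to re-tally the yearly counts yourself, a minimal sketch is below. It assumes the records were exported as JSON shaped like the NVD CVE API 2.0 response (a top-level `vulnerabilities` list whose items hold a `cve` object with `id` and `published` fields); `count_cves_per_year` is a hypothetical helper, and the sample IDs and dates are made up for illustration, not real NVD records.

```python
import json
from collections import Counter


def count_cves_per_year(feed: dict) -> Counter:
    """Count CVE records by the year of their published date.

    Assumes NVD API 2.0-style JSON: {"vulnerabilities": [{"cve": {...}}]}.
    """
    counts: Counter = Counter()
    for item in feed.get("vulnerabilities", []):
        published = item["cve"]["published"]  # ISO 8601, e.g. "2011-03-15T17:55:00.000"
        counts[int(published[:4])] += 1  # the first four characters are the year
    return counts


# Hypothetical sample data; not real NVD records.
sample = json.loads("""
{"vulnerabilities": [
  {"cve": {"id": "CVE-EXAMPLE-1", "published": "2009-06-01T10:00:00.000"}},
  {"cve": {"id": "CVE-EXAMPLE-2", "published": "2010-02-11T08:30:00.000"}},
  {"cve": {"id": "CVE-EXAMPLE-3", "published": "2010-09-23T14:15:00.000"}}
]}
""")

for year, total in sorted(count_cves_per_year(sample).items()):
    print(year, total)
```

Grouping by the published year mirrors a query over the full Published Date Range; sorting the resulting counts by year gives the same kind of timeline as the chart.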

Open Security Foundation

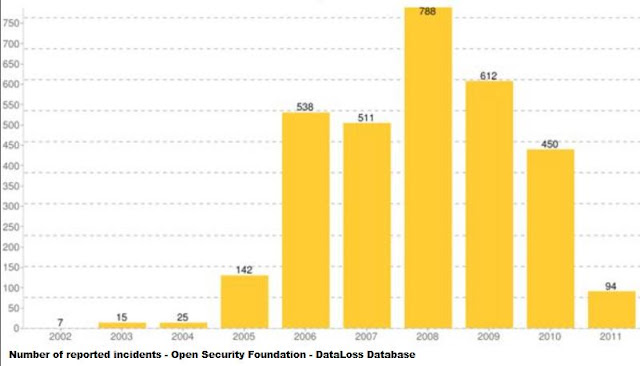

Among the data above, we are most concerned with high-risk vulnerabilities, such as those allowing remote exploitation (e.g., web/network attacks). So how do network attack incident records trend over the same timeline? The statistics below show web hacking incidents involving data loss/leaks recorded from 2002 to 2011, provided by the Open Security Foundation. This foundation tracks data loss and provides statistics on security breaches on the Internet.

To back up the previous statement: as you can see in the graph, the number of incidents has actually been dropping since the beginning of 2010. Does that mean the advisory management framework works? Let's look at remote exploitation in more detail below.

Web Application Security Consortium (WASC)

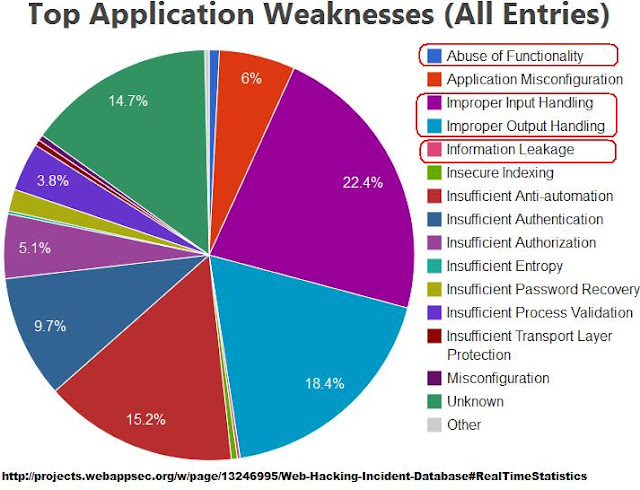

To answer that question, we should review the actual incident records too. The Web Application Security Consortium (WASC) runs a good real-time statistics project that classifies those incident attack threats, as in the following current snapshot:

The items I circled in red above are the factors linked to incidents caused mostly by exploitation of common vulnerabilities and exposures, and these factors underlie a large percentage of hacking incident cases. There are other factors too (the unmarked classifications above, such as insufficient authentication, authorization and automation control), but their shares are less significant, so let's set them aside. This leads us to the observation that most remote attacks are directly linked to the exploitation of common vulnerabilities. Imagine if we could reduce the Improper Input/Output Handling, Information Leak and Functionality Abuse factors: we MIGHT be able to cut the incident value down to 46%! For me, this is a big target that can be reached through small, daily follow-up. And all of the graphs shown above clearly support this possibility.

Noticing, controlling and managing your system by following the currently disclosed exposure information is the best and cheapest way to stay safe, and the correlation in the statistics above says more than words can. It points to the conclusion: "Hey, the vulnerability framework actually exists and works!" Well, it is supposed to work, of course. But a framework needs us as its backbone in order to run. As long as we follow, apply, help distribute and share exposure information, the framework will run better, which will also raise the public's common-sense understanding of security vulnerability risks and facts, plain and simple.
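In practice, following the exposure information means comparing what you run against what has been disclosed. A minimal sketch of that check, assuming a hypothetical inventory that maps product names to plain dotted numeric versions, and hypothetical advisories that each name the first fixed version (real advisories often describe affected versions in more complex ways). The `Advisory` type and both helper functions are illustrative names, not an existing tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Advisory:
    """A hypothetical, simplified advisory record."""
    advisory_id: str
    product: str
    fixed_in: str  # first version that contains the fix, e.g. "2.2.22"


def parse_version(text: str) -> tuple:
    """Turn a plain dotted version like "2.2.21" into (2, 2, 21) for comparison."""
    return tuple(int(part) for part in text.split("."))


def pending_advisories(inventory: dict, advisories: list) -> list:
    """Return IDs of advisories that still apply to the installed versions."""
    pending = []
    for adv in advisories:
        installed = inventory.get(adv.product)
        # Skip products we do not run.
        if installed is None:
            continue
        # Still below the first fixed version: the advisory applies.
        if parse_version(installed) < parse_version(adv.fixed_in):
            pending.append(adv.advisory_id)
    return pending


# Hypothetical inventory and advisories, for illustration only.
inventory = {"web-server": "2.2.21", "ssh-daemon": "5.9"}
advisories = [
    Advisory("EXAMPLE-1", "web-server", "2.2.22"),
    Advisory("EXAMPLE-2", "ssh-daemon", "5.8"),
    Advisory("EXAMPLE-3", "mail-server", "1.0.0"),
]
print(pending_advisories(inventory, advisories))  # prints ['EXAMPLE-1']
```

Tuple comparison keeps the sketch short, but it only handles purely numeric versions: a suffix such as "1.0.1e" would need a real version parser, and it treats "1.0" as lower than "1.0.0".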

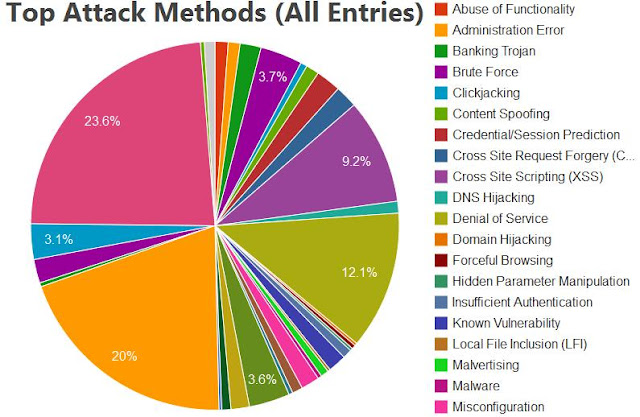

Finally, in the graph below, I add the current Top Attack Methods statistics from the Web Hacking Incident Database project:

This graph tells us that, other than exploited vulnerabilities, malicious activity on the Internet is the other considerable factor in the security threat, yet it accounts for less than 10% of the top attack records above. On the other hand, Human Error (e.g., misconfiguration) is linked to 23.6% of these top attacks. So why do anti-malware products seem to cost more than, say, education? For example, a good open-source firewall/IDS security book is much cheaper than a box of some anti-virus product.

This leads us to a new and sad fact of life: people tend to buy a security solution rather than get directly involved themselves. Simply put, the "below-10%" risk gets the bigger purchasing budget, the 23.6% risk gets less budget (and almost zero in some SMBs), while the effort to reduce the 40%+ risk is outsourced to external resources.

I simply don't get it. Where has common sense gone?

My closing words: "Your best computer security protection is your common sense in understanding the threats and the framework for handling them. We are not living in a perfect world, and the Internet, as one aspect of life, is its 'Wild West'. Spending money alone does not make you more secure and can weaken your sense; educating yourself and following Internet security exposures will develop the best sense to protect yourself against current and future threats."

I hope this post helps you understand the Vulnerability Management Framework. I am not a writer, so please don't be bothered by mistakes in my essay; instead, try to grasp its essence.

----

ゼロデイ・ジャパン / Zero Day JP http://0day.jp

by: アドリアン・ヘンドリック / Hendrik ADRIAN

マルウェア研究者 / Malware Researcher

Lab: http://0day.jp | Twitter: @unixfreaxjp

Sponsored by: 株式会社ケイエルジェイテック
